Coord is generative music software where the user steers a rhythm or melody toward chosen musical targets in real time.

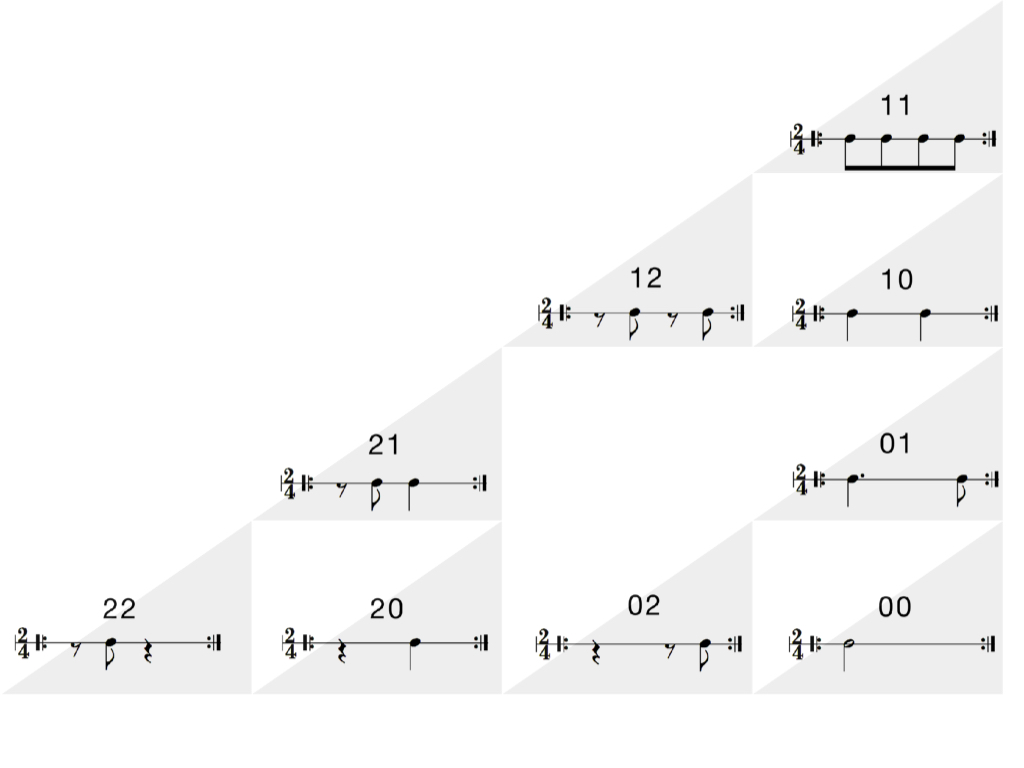

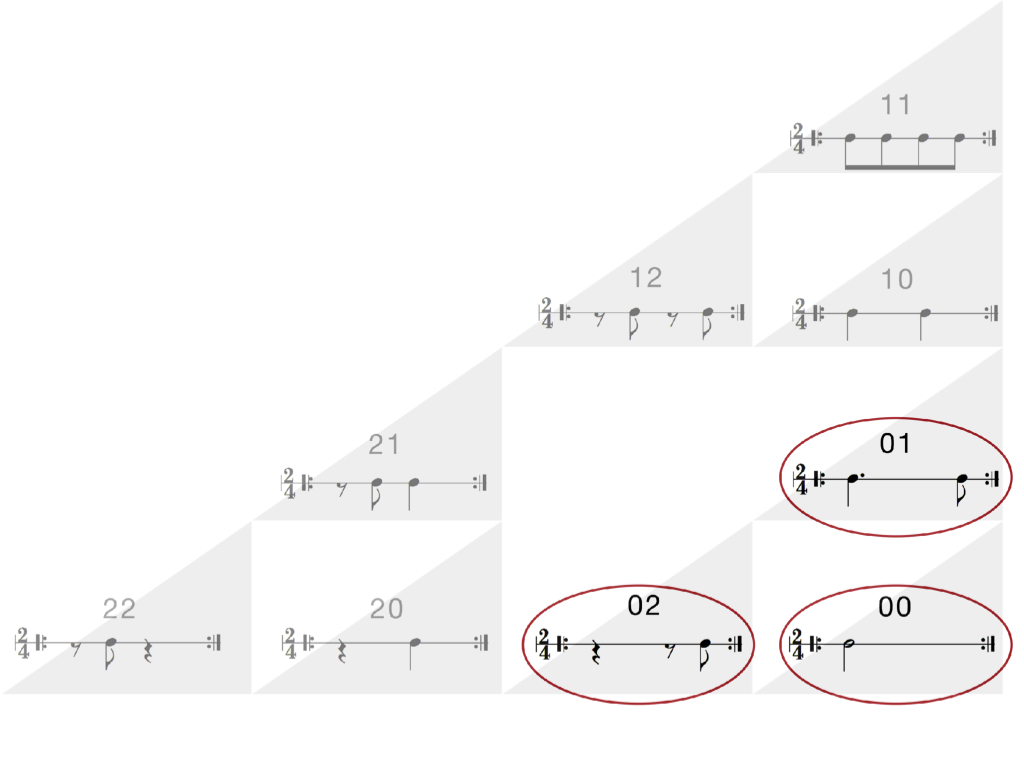

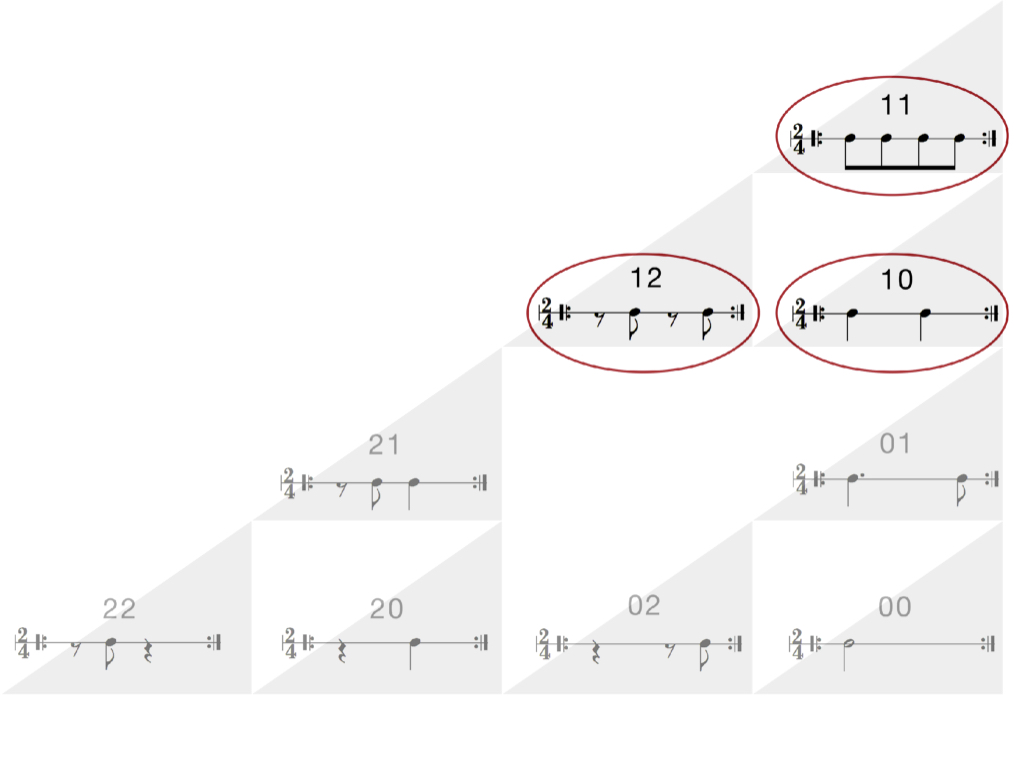

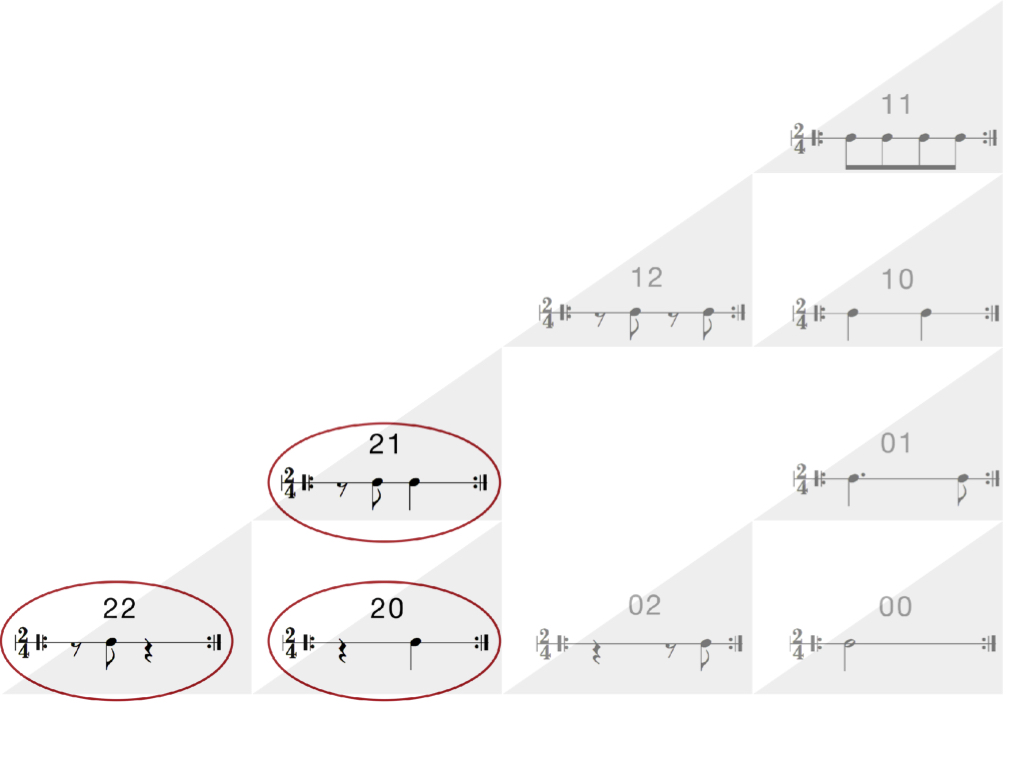

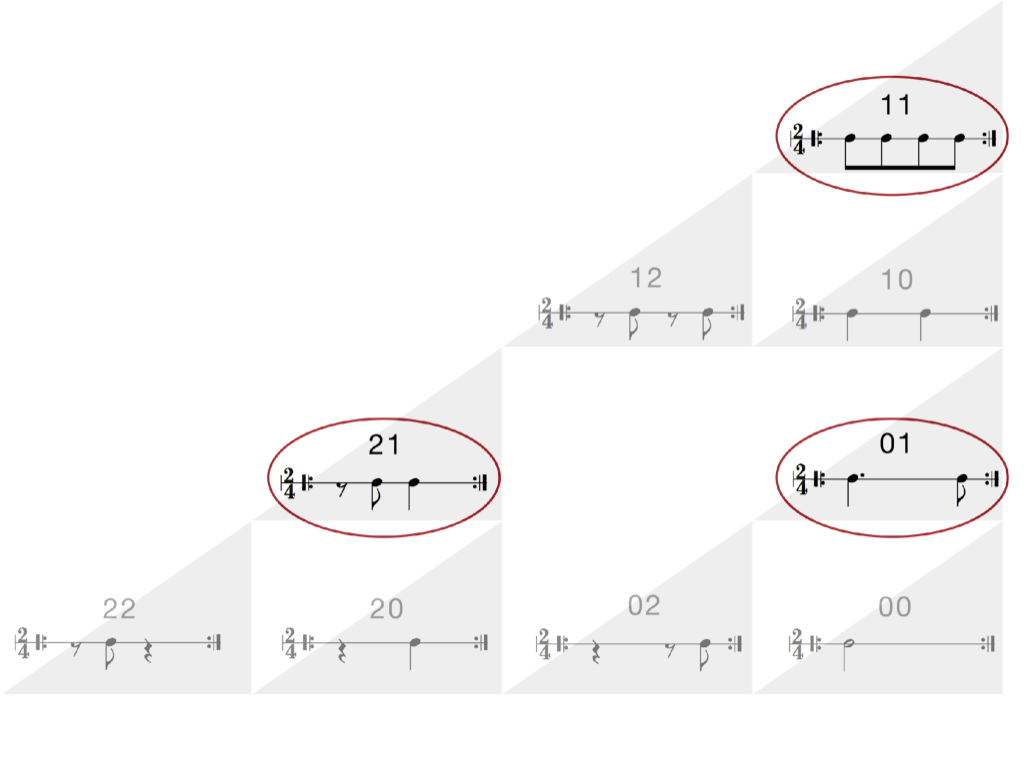

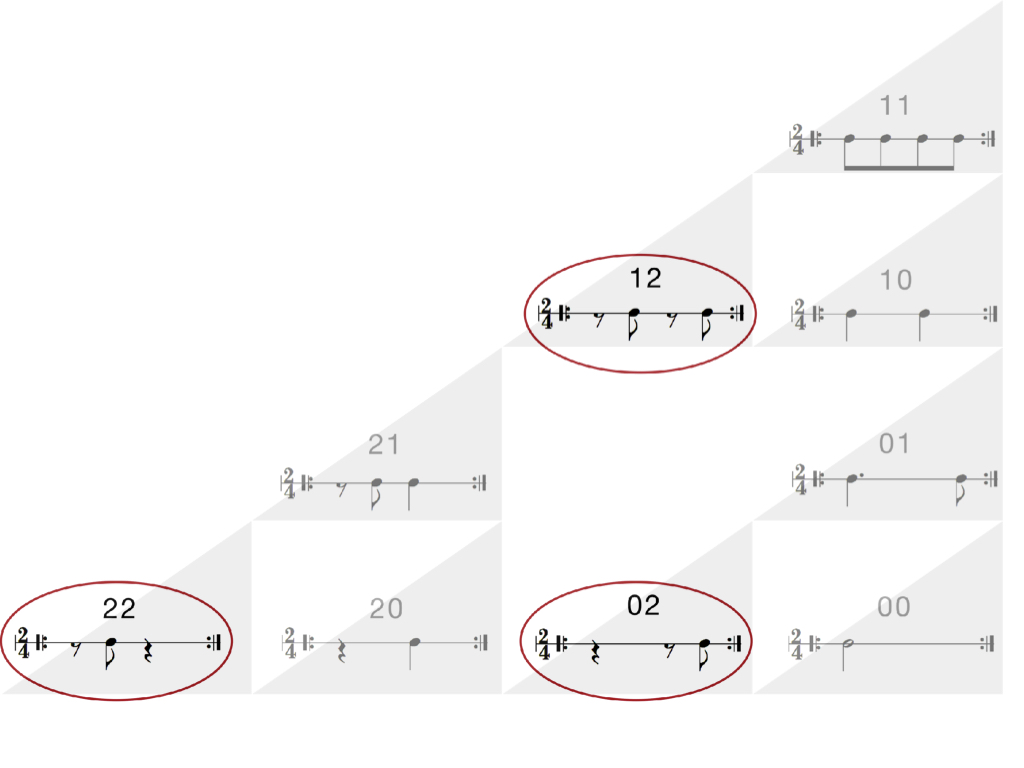

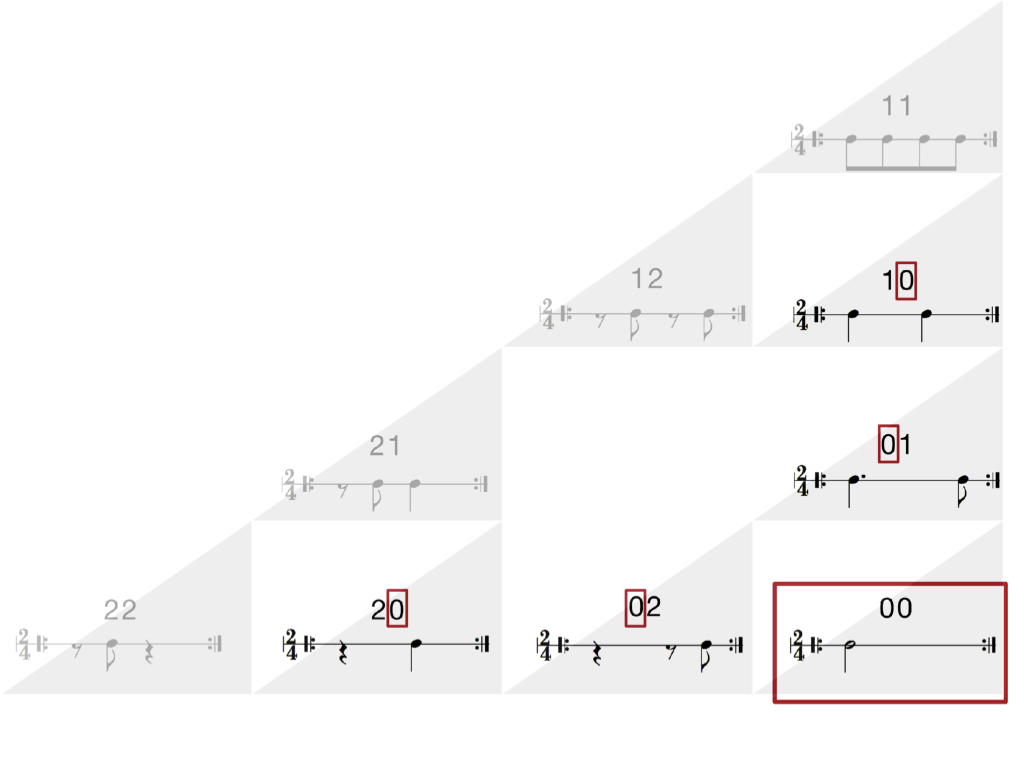

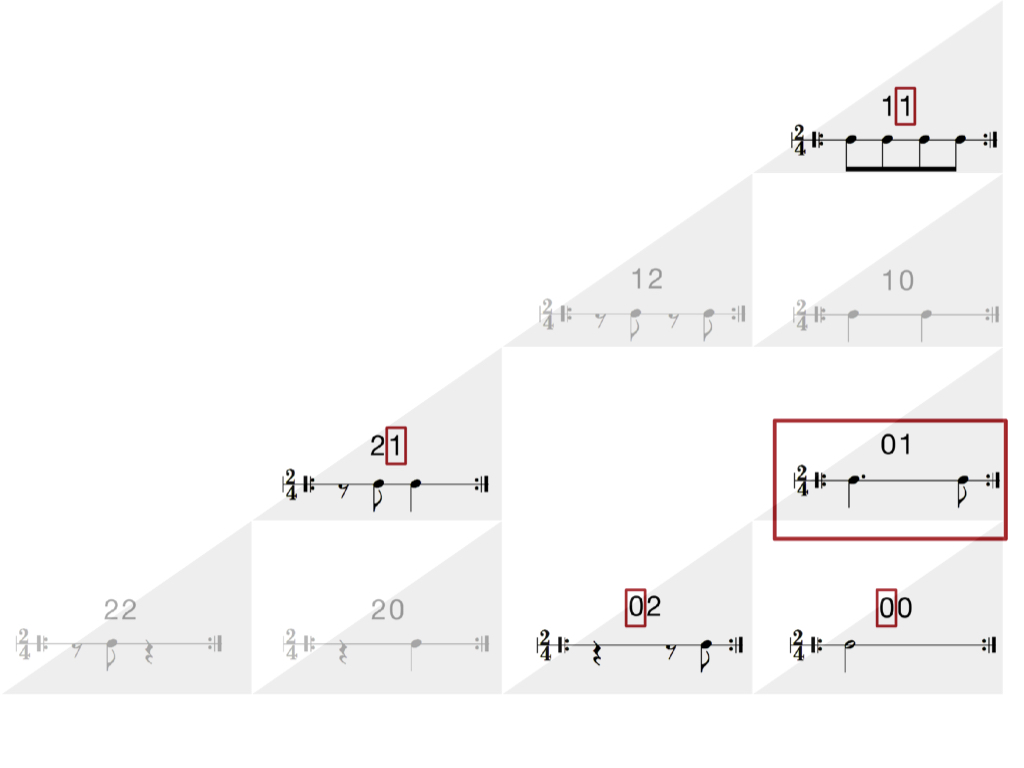

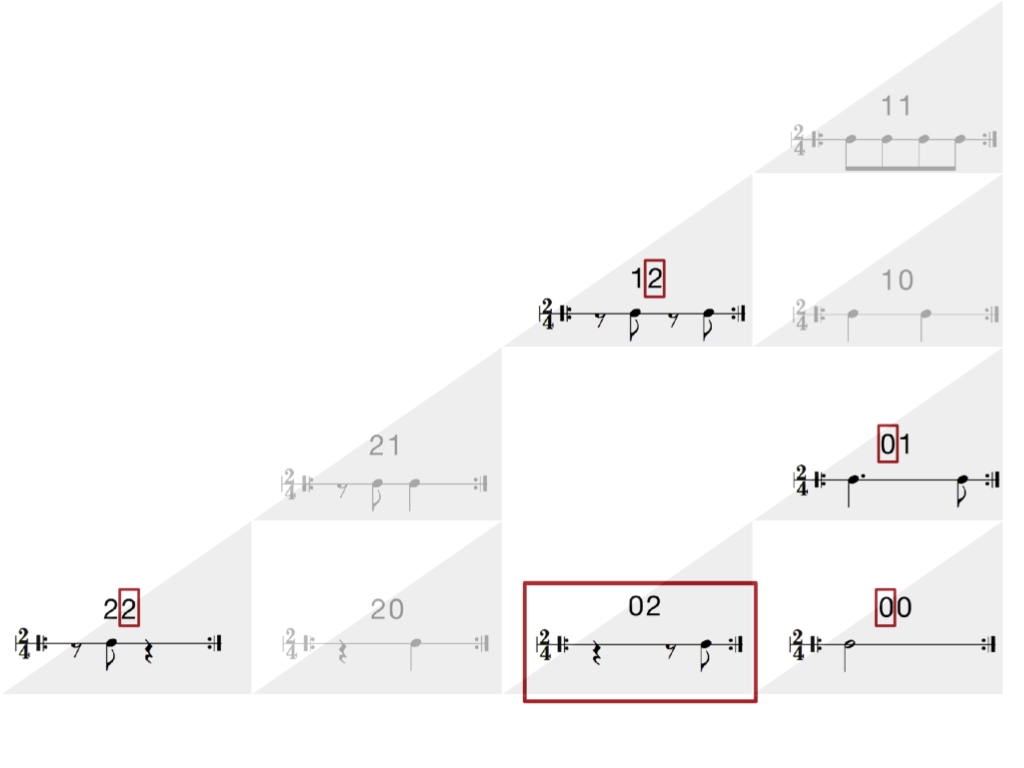

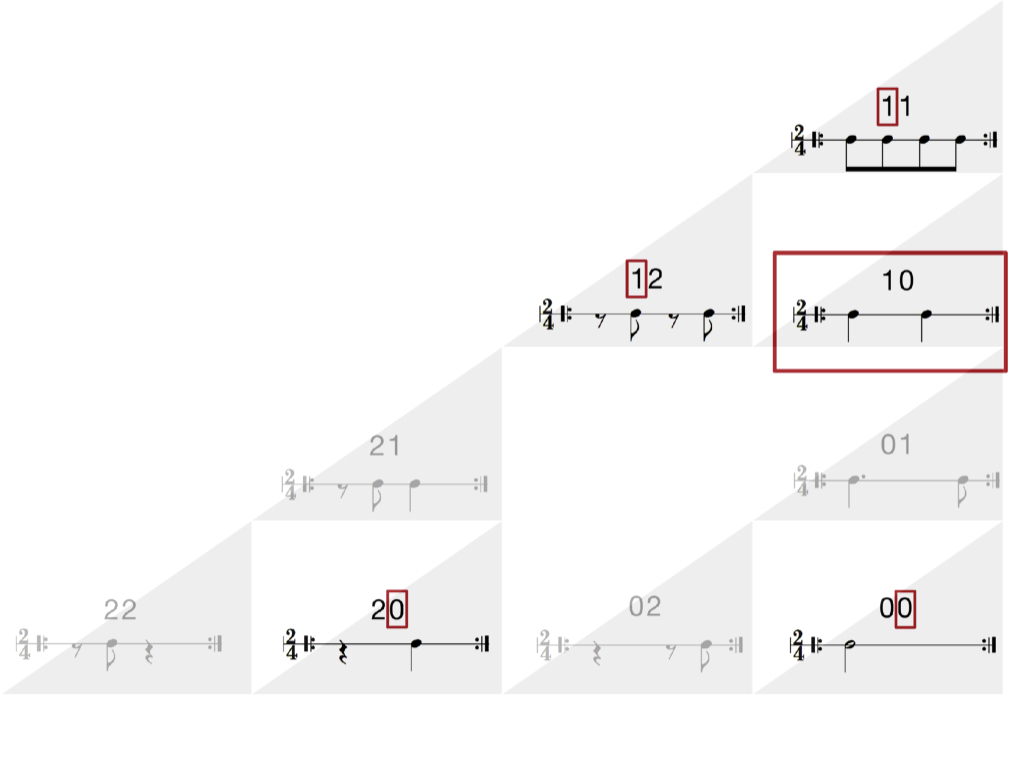

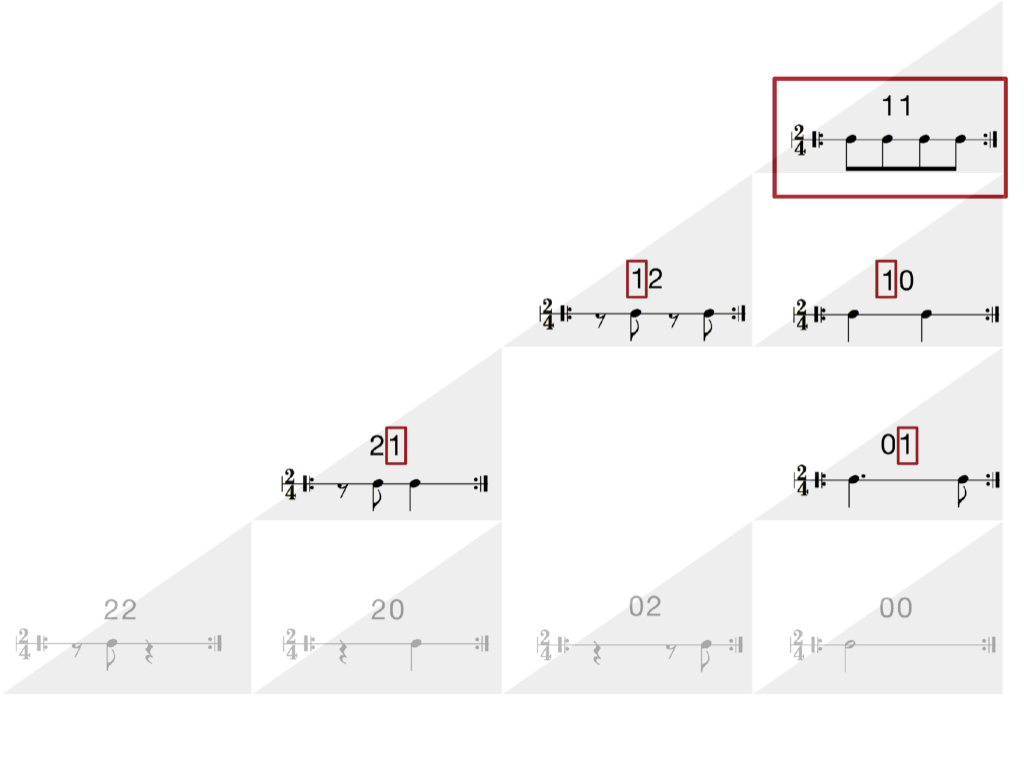

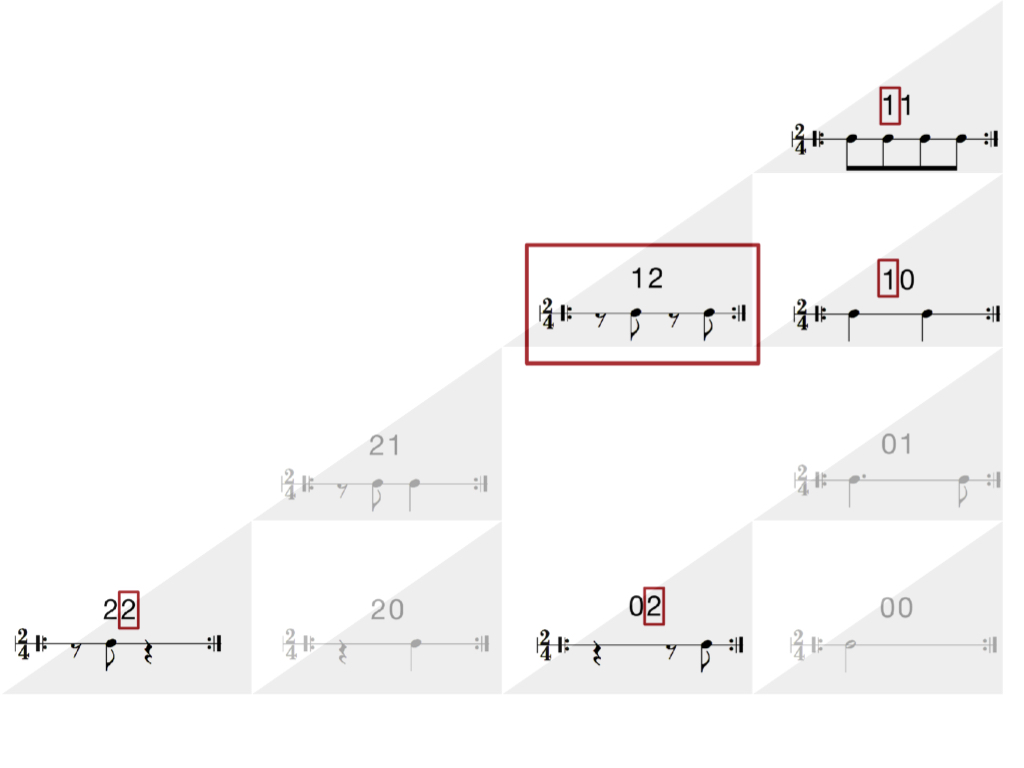

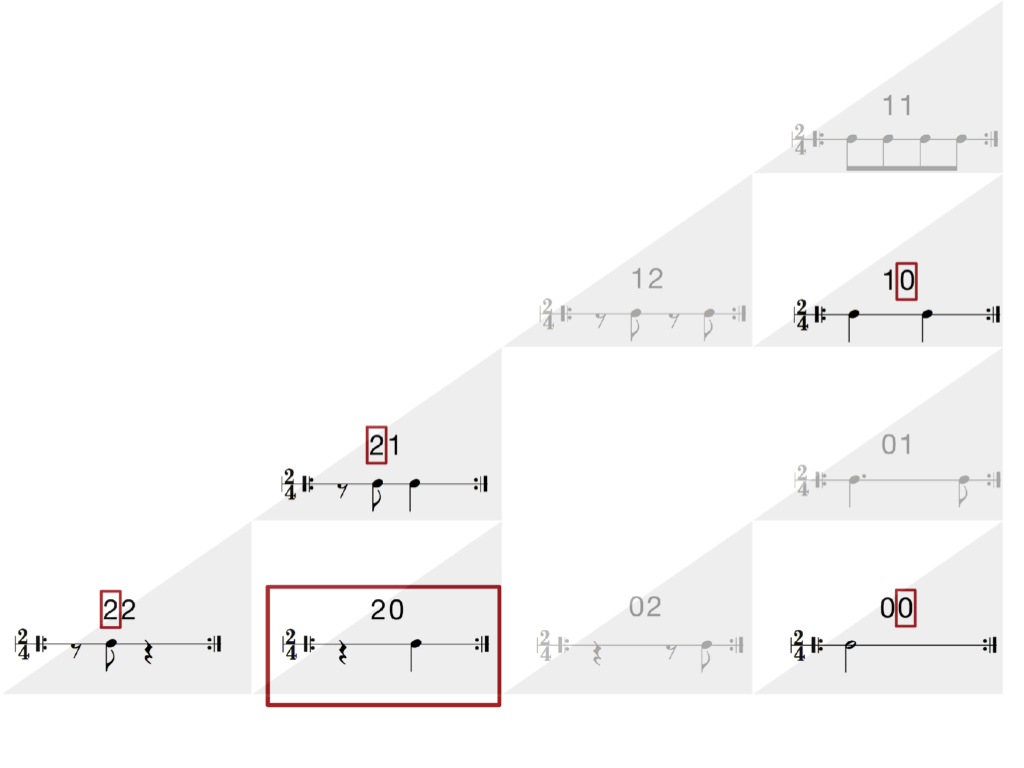

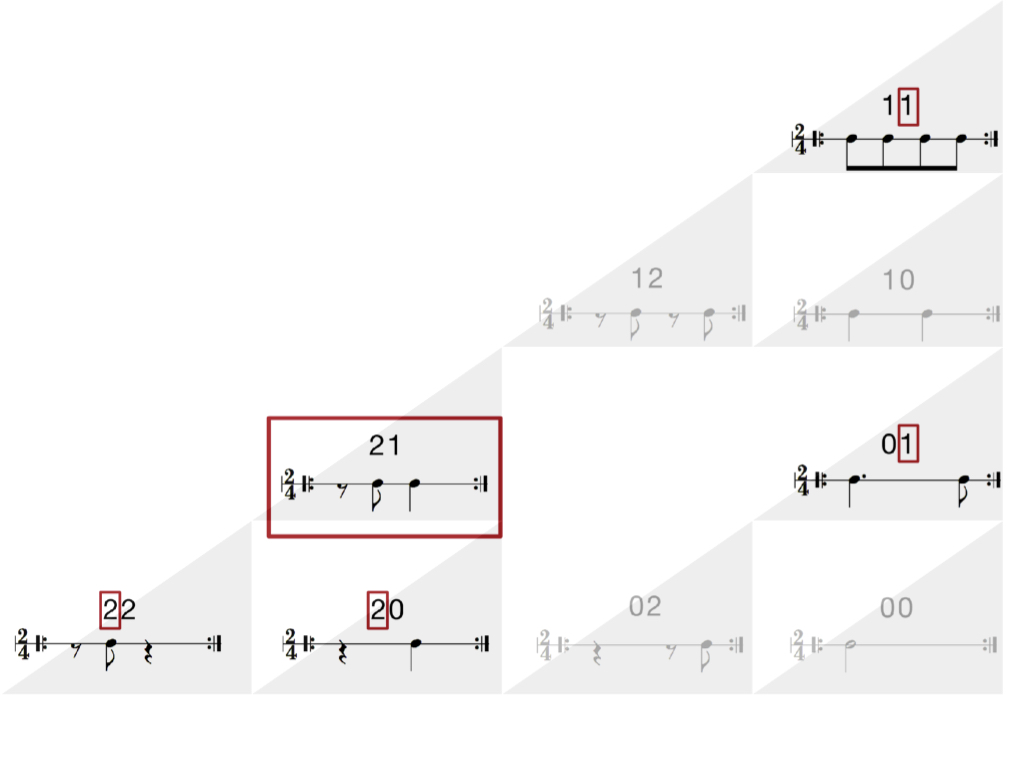

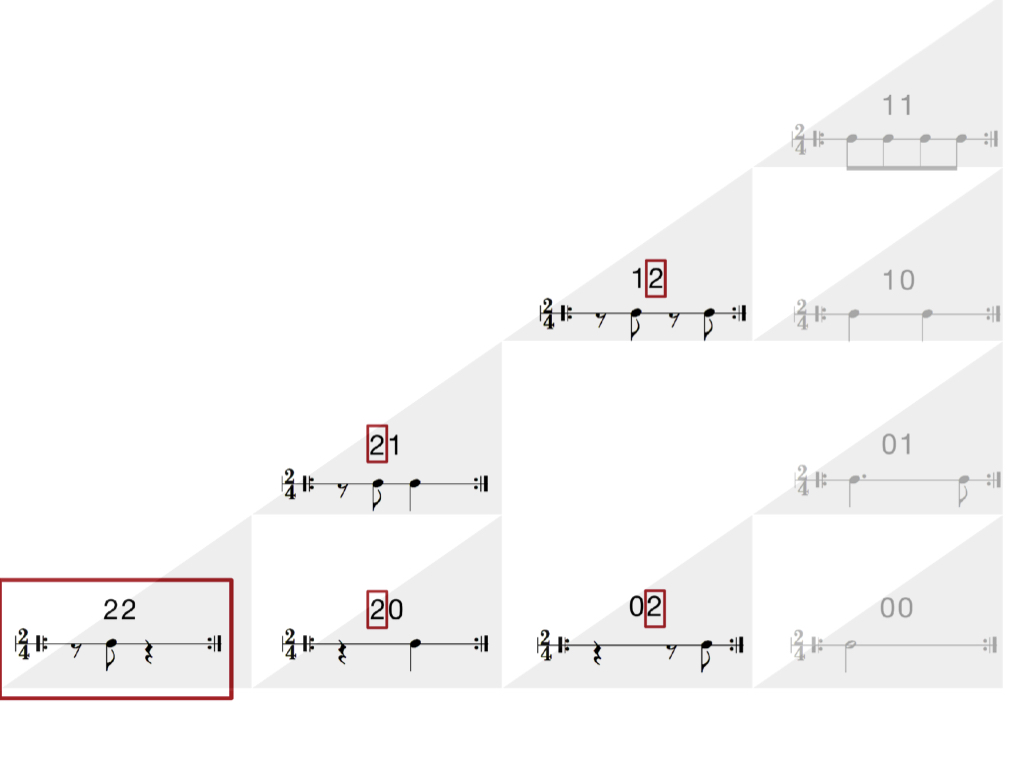

The aim is to improvise, in a sense, by exploring patterns of musical anticipation and arrival. The algorithm uses rhythmic building blocks to model musical expectations and their outcomes, and it generates variations as functions of those building blocks.
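The idea of building blocks and variations can be illustrated with a minimal Swift sketch. It is not Coord's actual model. It assumes that a block is a short onset pattern on a 16-step grid, anchored at a step. An "arrival" is a single onset on the anchor, and a "pickup" adds an anticipating onset one step before it. A variation is produced by swapping the block at one placement. The names `Block`, `Pattern`, `compose`, and `vary` are hypothetical.

```swift
// Hypothetical sketch, not Coord's actual model: a building block is a short
// onset pattern whose offsets are relative to an anchor step.
struct Block {
    let name: String
    let onsets: [Int]  // e.g. [-1, 0] = pickup (anticipation) then arrival
}

// A looping pattern of `length` steps with a set of active onsets.
struct Pattern: Equatable {
    let length: Int
    var steps: Set<Int>

    // Render as a step string: "x" for an onset, "." for a rest.
    func render() -> String {
        (0..<length).map { steps.contains($0) ? "x" : "." }.joined()
    }
}

// Place each block at its anchor, wrapping offsets around the loop.
func compose(_ placements: [(block: Block, anchor: Int)], length: Int) -> Pattern {
    var steps = Set<Int>()
    for p in placements {
        for offset in p.block.onsets {
            steps.insert(((p.anchor + offset) % length + length) % length)
        }
    }
    return Pattern(length: length, steps: steps)
}

// A variation as a function of the blocks: swap the block at one placement.
func vary(_ placements: [(block: Block, anchor: Int)],
          at index: Int, with block: Block) -> [(block: Block, anchor: Int)] {
    var result = placements
    result[index].block = block
    return result
}

let arrival = Block(name: "arrival", onsets: [0])
let pickup = Block(name: "pickup", onsets: [-1, 0])

let placements: [(block: Block, anchor: Int)] = [
    (block: arrival, anchor: 0),
    (block: arrival, anchor: 8),
]
let base = compose(placements, length: 16)
let variation = compose(vary(placements, at: 0, with: pickup), length: 16)
print(base.render())       // x.......x.......
print(variation.render())  // x.......x......x
```

In the variation, the pickup placed at step 0 wraps to step 15. The pattern therefore gains an onset that anticipates the downbeat of the next loop, while the arrival itself is unchanged.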

This app operates on individual tracks, like a plugin.

Music elements and composition: Q Department

This example shows Coord generating variations on a lead synth track by morphing between three chosen target MIDI clips in Ableton Live, within a (mostly static) larger composition. The apps and the algorithm are implemented in Swift and integrated with Live via Max/MSP and OSC (Open Sound Control). The apps are additive and subtractive pattern composers: the same set of building blocks is used for both note patterns and muting patterns.
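One set of building blocks can serve both note patterns and muting patterns. The minimal Swift sketch below shows one way this could work. It assumes that a block is a list of step offsets placed at an anchor in a looping clip. The additive path turns the covered steps into new notes. The subtractive path mutes the notes of an existing clip on those same steps. `Block`, `Note`, `coveredSteps`, `addNotes`, and `muteNotes` are hypothetical names. The sketch omits the morphing between target clips and the Max/MSP/OSC integration.

```swift
// Hypothetical sketch: one set of building blocks drives both an additive
// composer (blocks place notes) and a subtractive one (blocks mute notes).
typealias Block = [Int]  // step offsets relative to an anchor

struct Note: Equatable {
    let step: Int
    let pitch: Int  // MIDI note number
}

// Steps covered by blocks placed at anchors in a loop of `length` steps.
func coveredSteps(_ placements: [(block: Block, anchor: Int)], length: Int) -> Set<Int> {
    var steps = Set<Int>()
    for p in placements {
        for offset in p.block {
            steps.insert(((p.anchor + offset) % length + length) % length)
        }
    }
    return steps
}

// Additive: each covered step becomes a new note at the given pitch.
func addNotes(_ steps: Set<Int>, pitch: Int) -> [Note] {
    steps.sorted().map { Note(step: $0, pitch: pitch) }
}

// Subtractive: notes on covered steps are muted out of an existing clip.
func muteNotes(in clip: [Note], _ steps: Set<Int>) -> [Note] {
    clip.filter { !steps.contains($0.step) }
}

let pickup: Block = [-1, 0]
let m = coveredSteps([(block: pickup, anchor: 4)], length: 8)

let added = addNotes(m, pitch: 60)
let clip = (0..<8).map { Note(step: $0, pitch: 67) }
let thinned = muteNotes(in: clip, m)
print(added.map { $0.step })    // [3, 4]
print(thinned.map { $0.step })  // [0, 1, 2, 5, 6, 7]
```

Both paths share `coveredSteps`, so the same rhythmic idea can either thicken a track or thin it out. This is one possible reading of "additive and subtractive" here.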

Details are in these journal/conference papers. An informal overview is in this article on Medium.